Next week we’re working on a sound score for Shobana Jeyasingh Dance in a research and development project with King’s College London and the Bartlett School of Architecture, UCL. The project brings together choreography, robotics theory from KCL Informatics, and mechanical design from the Bartlett’s Interactive Architecture Lab.

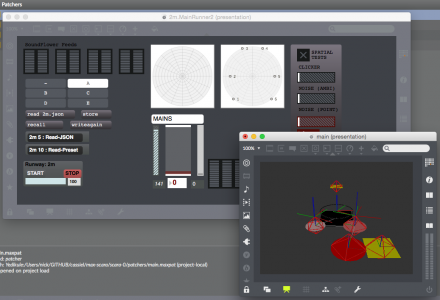

The sound score is still on the drawing board, but it will be the usual mix of Cycling ’74 Max and Ableton Live, with a bit of Max’s physics engine thrown in to explore some ideas around robotic underactuation. The audio will be played over a six-speaker ambisonic setup for a bit of surround-sound goodness.

The venue is the KCL Anatomy Museum, and the session will be open to the public on Friday 3rd July, 2–4pm.

More information is available from the Interactive Architecture Lab, and there is a preview article at Dance UK.